Why Trust Is the Foundation of a Good Experimentation Platform


Two Homies Talk Experimentation Platforms

Two of my favorite people in the experimentation space—my homie Shiva Manjunath and our very own Jonas Alves—recently chatted about experimentation platforms on Shiva’s stellar podcast, “From A to B.”

If you know Shiva, you know he doesn’t hold back. (And if you don’t know him, you should.) Right out of the gate, he asked Jonas, as the co-founder of a testing tool, a delightfully blunt question: “Why am I giving you thousands of dollars just to randomize traffic?”

And it’s a fair question!

Jonas’s answer surprised me. (And surprising me is hard for Jonas, because I’ve known him for more than 10 years.) He replied: “When you buy an experimentation platform, you aren’t just paying for a randomization engine. You’re paying for trust.”

When you buy an experimentation platform, you aren’t just paying for a randomization engine. You’re paying for trust.

– Jonas Alves, Co-founder, ABsmartly

Here’s my take on their conversation, and why trust should be the foundation for any experimentation platform that you buy (or build).

The Trust Triangle: How We Learn to Trust a Tool

Before we get into podcast takeaways, we have to ask: what actually is trust?

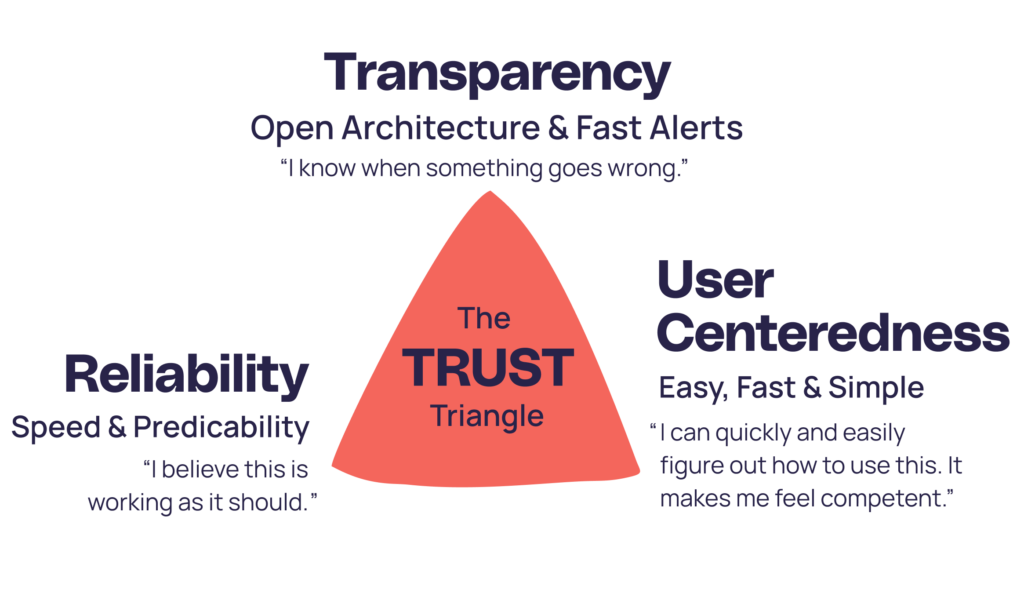

My favorite way to understand trust is through a model called “The Trust Triangle.” This model illustrates that we trust people when we consistently experience three things in our interactions with them:

- Logic. Their reasoning is sound, and they are competent.

- Authenticity. They are real with us, and aren’t hiding anything.

- Empathy. They care about us, and they want us to succeed.

Source: Harvard Business Review, May–June 2020

Believe it or not, we build relationships with software the same way. When your team logs into your testing platform, they subconsciously run it through “The Trust Triangle,” but with a twist. Here’s how “The Trust Triangle” changes when people assess a platform’s trustworthiness instead of a person’s:

“Logic” becomes “reliability.”

Instead of asking, “Does this person seem competent and know what they’re talking about?” they ask, “Does the math in this tool check out? Do things make sense?” For example, when a data scientist pulls the raw numbers to verify the tool’s results, do they match? Do the data visualizations lead you to the correct conclusions? Or do they mislead you with colors and numbers that come without confidence intervals? If the logic fails, trust fades.
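For a concrete taste of what “the math checks out” can mean, here’s one common textbook way to put a confidence interval on an A/B result: the normal-approximation (Wald) interval for the difference between two conversion rates. This is a minimal sketch, not necessarily what any particular platform (ABsmartly included) uses, and the function name and visitor counts are hypothetical. If an interval like this straddles zero, a bright green “winner” badge on its own would be misleading.

```python
from math import sqrt
from statistics import NormalDist


def diff_in_conversion_ci(conv_a, n_a, conv_b, n_b, level=0.95):
    """Wald confidence interval for (rate_b - rate_a).

    Hypothetical helper for illustration only. It relies on the normal
    approximation, which is reasonable only for fairly large samples.
    """
    p_a = conv_a / n_a  # control conversion rate
    p_b = conv_b / n_b  # treatment conversion rate
    # Standard error of the difference between two independent proportions.
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    # Two-sided critical value, about 1.96 for a 95% interval.
    z = NormalDist().inv_cdf(0.5 + level / 2)
    diff = p_b - p_a
    return diff - z * se, diff + z * se


# Hypothetical data: 1,000 visitors per group, 100 vs. 150 conversions.
low, high = diff_in_conversion_ci(100, 1000, 150, 1000)
print(f"95% CI for the difference in conversion rate: [{low:.3f}, {high:.3f}]")
```

Showing the whole interval, rather than a single point estimate, is what lets a data scientist sanity-check the tool against the raw numbers.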

“Authenticity” becomes “transparency.”

Instead of asking, “Is what I see what I get? Are your motives pure?” they ask, “Does the tool hide the ugly stuff? Or does it show me when something goes wrong?” For example, if an experiment is broken, a trustworthy tool doesn’t pretend everything’s fine. It quickly flags the problem, such as a sample ratio mismatch, an influx of bots, or an audience mismatch. You need to know the builders of the platform have your best interests at heart.

“Empathy” becomes “user-centeredness.”

Instead of asking, “Does this person care about me?” they ask, “Is this fast and easy for me to use?” For example, does the tool understand an experimenter’s day-to-day struggles and make the sophisticated stuff simple? Does it give you results quickly so you don’t waste any time? A trustworthy tool doesn’t make you feel stupid with scary data science jargon. And it doesn’t make you jump through hoops to answer your questions. It gives you contextual tips and smart recommendations to help you do your job better.

People don’t just trust a tool on day one. They learn to trust it through using it. They run tests, verify the data, and wait to see how the platform handles the tricky stuff. Once a tool proves it has solid logic, genuine empathy for its users, and total authenticity about errors, that’s when things get fun. That’s when people trust the tool enough to be bolder. And being bolder is when the A/B testing flywheel really starts spinning.

Trust In Experimentation Isn’t Just About Splitting Traffic

Like Shiva said in the podcast, anyone can build a basic tool that splits traffic. But as Jonas pointed out, if your team doesn’t trust the data, your whole program pays in velocity. Jonas saw this happen a few times at Booking.com, where he built the company’s experimentation platform. He mentioned that even at a company with a mature experimentation culture, trust is fragile.

For example, he shared that one specific bug in Booking’s experimentation tooling accidentally invalidated three whole months of data. Imagine being an experimenter and finding out a quarter’s worth of your hard work was based on a mistake. That’s the kind of nightmare that causes people to completely check out. Learning from this experience, Jonas told Shiva in the podcast that, “It’s actually not about the dashboards and the tool itself. It’s about building trust. If you lose trust, people will not run experiments.”

It’s actually not about the dashboards and the tool itself. It’s about building trust. If you lose trust, people will not run experiments.

– Jonas Alves, Co-founder, ABsmartly

Recovering Trust Through Radical Transparency

So, how’d Jonas earn that trust back? It wasn’t through a sales pitch. (He’s terrible at those.) It was with proof.

First, Jonas and his team were super transparent about what went wrong. At ABsmartly, we believe you can’t hide it when something breaks; you’ve got to have the “authenticity” to flag it. That transparency is a core value we brought with us from Booking.

How’d that transparency play out in Jonas’s story? His team reported every part of the recovery process. Then, they showed everyone the evidence that the issue was fixed. Not only that, they also let other people double-check their work.

They didn’t just say, “Trust us. It’s fine now.” They went a step further and added more checks and guardrails to ensure it couldn’t happen again. That approach was closely in line with one of our Booking mantras: “It’s OK to break things. Just don’t break something the same way twice.” (In other words: Learn from your mistakes. Then, prevent them from happening again.)

After they showed everyone the data behind the fix, experiment velocity slowly picked back up. This story Jonas shared on “From A to B” is a great reminder that while trust takes years to build, it can vanish in an instant.

Babies Aren’t LeBron James, and You Can’t Expect Them To Be

It’s easy to look at tech giants like Microsoft or Booking.com and feel like your experimentation program kinda sucks. One of my favorite quotes that I often remind myself of is, “The first step to learning anything is not knowing.” Then, I follow it up with, “And I can’t expect myself to know how to do something that I don’t know how to do.”

The first step to learning anything is not knowing.

– Erin Weigel, Strategic Advisor, ABsmartly

The point? Mastery takes time. And you’ve got to give yourself (and your co-workers) grace.

Shiva brought up a fantastic sports analogy during the episode. He shared that you don’t hold a five-year-old learning to play basketball to the same strict rules as you would LeBron James. If a toddler double-dribbles, you let it slide because you’re happy they picked up the ball. As they get older, you teach them more and more of the rules. And when they get to the NBA? Then you go hard. That said, not everyone needs to get into the NBA. You can still get value from playing basketball by being good enough and following most of the rules.

So, when you’re just starting out, the goal is simply to get the A/B test wheel spinning. Teach your team to pick up the ball. It’s OK if your tests aren’t flawless. And it’s OK if people miss a foul shot. In both experimentation and basketball, you just need to start somewhere so you can start learning and getting better.

You need to run experiments, and it doesn’t matter with what. You just want people to run experiments, because that will start the wheel spinning.

– Jonas Alves, Co-founder, ABsmartly

How Automation Can Illustrate Authenticity

As your program matures (and your “toddler” goes pro), you need automated safety checks to keep your data clean. This is where a good platform really earns its keep.

Jonas specifically talked about the importance of features like Sample Ratio Mismatch (SRM) alerts. In plain English, an SRM alert is a built-in warning that traffic isn’t splitting between your control and treatment groups the way you configured it (for example, a test set to 50/50 that is actually delivering 52/48 on a large sample). (Don’t know what an SRM is? Here’s an infographic I drew about SRMs to explain why they’re bad.)

Jonas mentioned that some experimentation tool vendors used to hide these alerts because they were afraid of looking broken. Instead, they let their customers make decisions based on garbage data. Not cool. Failing to flag these problems proactively shows a lack of genuine care for customers and their goals. Because, again, transparency builds trust. And trust keeps people experimenting.
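For the curious, here’s roughly what an SRM check boils down to. A common approach is a chi-square goodness-of-fit test that compares the observed group sizes against the split you configured. This is a minimal Python sketch under stated assumptions: the function names and the 0.001 alert threshold are hypothetical choices for illustration, not ABsmartly’s actual implementation.

```python
from math import erfc, sqrt


def srm_p_value(control_n, treatment_n, expected_control_share=0.5):
    """Chi-square goodness-of-fit p-value for a two-group traffic split."""
    if not 0 < expected_control_share < 1:
        raise ValueError("expected_control_share must be between 0 and 1")
    total = control_n + treatment_n
    expected_control = total * expected_control_share
    expected_treatment = total - expected_control
    chi2 = ((control_n - expected_control) ** 2 / expected_control
            + (treatment_n - expected_treatment) ** 2 / expected_treatment)
    # With 1 degree of freedom, P(X > chi2) = erfc(sqrt(chi2 / 2)).
    return erfc(sqrt(chi2 / 2))


def has_srm(control_n, treatment_n, expected_control_share=0.5, threshold=0.001):
    """Flag an SRM when the split is too lopsided to be plausibly random.

    The 0.001 threshold is an assumption; teams pick their own strict cutoffs.
    """
    return srm_p_value(control_n, treatment_n, expected_control_share) < threshold


# Hypothetical counts for a test configured as 50/50.
print(has_srm(5010, 4990))    # False: a small wobble that chance easily explains
print(has_srm(50000, 48000))  # True: a 2,000-user gap on about 98,000 users
```

The point of running a check like this automatically on every experiment is that nobody has to remember to look: the platform raises its hand the moment the split looks wrong.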

The “Build vs. Buy” Debate (and How It’s Changed Over Time)

Naturally, Shiva asked Jonas for his strongest argument on why a company should build its own tool instead of buying one.

Jonas admitted that years ago, he used to tell his consulting clients to build in-house! Why? Because he knew they’d often need complex features most platforms didn’t offer. And the trust just wasn’t there. But times have changed. Today, he argues that the hidden costs are just too high for building your own to make sense. You don’t just build a tool once. It’s an ongoing investment: you have to maintain it, keep up with new statistical methods, and manage a dedicated team just to keep it running. (Let alone keep it trustworthy!)

These days, it’s almost always better to buy a platform you trust. Then, if you want to, build your own custom extensions on top of it.

Four Practical Takeaways for Your Experimentation Program

I loved listening to this episode of “From A to B.” Here are four practical ways, drawn from Jonas and Shiva’s conversation, that you can improve your experimentation program today:

- Just start somewhere. If you have low maturity, don’t stress about perfection on day one. Just get some tests live to build the habit.

- Pick the right metric. Before you launch, make sure you’re measuring the right primary metric for your specific product or page, and that it works properly. When the metric pipeline works correctly, you can trust the outcome.

- Focus on hypothesis quality. Once your testing pipeline is smooth, train your team to write stronger hypotheses. The best tooling setup in the world is worth nothing without customer research and critical thinking.

- Track the program, not just the test. Measure the overall health of your experimentation culture. Look at your testing velocity, the number of bad stops, and your data quality, instead of obsessing over individual test results. Spot the biggest patterns and strategically teach the lessons your teams need to learn next.

Links to the Full Podcast Episode

Interested in listening to the “From A to B” episode, too?

You can find it here on:

Or, if you want to check out ABsmartly (to see what Jonas built with our team), get a demo here.
